Blog

A Sticky Note Hack for Finishing Things

There’ve been many moments over the past few months when I’ve felt haunted by the things I would rather be doing. An idea would float by, something that in a normal time I’d read about that night or spend a Saturday making, but it would find me totally occupied. I tried batting these ideas away at first, but they’d come back. It was distracting and kind of distressing. It felt like my subconscious was forcing me to consider that, if I couldn’t engage with what my mind actually thought was interesting, maybe I was doing something wrong.

Then I realized that they were only coming back because they didn’t want to be forgotten. So I started writing them down, as soon as they’d arrive, one to a sticky note. And they stopped coming back. I got a quiet mind and a physical manifestation of what I was working toward. There’s probably a post about this idea somewhere on 43folders, but I’m writing this anyway in case it finds someone else with the same problem.

At this point I don’t remember much of what’s in the pile (it’s about a pad and a half, plus some mail I didn’t have the bandwidth to deal with), and I’ve spent my first couple of unoccupied days cleaning my apartment instead of looking through them. But it worked. I’m here, and they’re there, ready for tomorrow.

I Successfully Defended My PhD

Keep Untracked Files Handy with a Global .gitignore Pattern

I often find myself in a situation where I have a file that I want to keep around, but don’t want to commit to the repository. Things like notes (todo.txt) or examples (bug_repro.py) or maybe an uncompressed version of an image (banner_xxl.jpg). They could live somewhere outside the source tree, but I like to keep them close to the code they relate to so I don’t forget about them.

Instead of cluttering every repo’s .gitignore with one-off rules, I adopted the prefix _#_ for any file or directory I want Git to ignore. This way I can just add the pattern to the global .gitignore rules (at $HOME/.config/git/ignore by default on macOS):

_#_*

Simple, but it keeps my workflow clean and my untracked files where I can find them.
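
To get a feel for what the pattern catches, here is a rough Python sketch. It only approximates Git's matching with `fnmatch` (Git's real rules have more cases), and the paths are hypothetical ones built from the file names above. Running `git check-ignore -v <path>` inside a repo is the authoritative check.

```python
from fnmatch import fnmatchcase

# The global ignore pattern from the post.
PATTERN = "_#_*"


def looks_ignored(path: str) -> bool:
    """Approximate gitignore matching for a pattern that contains no slash.

    Such a pattern is tested against each path component, so a prefixed
    directory hides everything beneath it. This is an approximation for
    illustration, not Git's actual matcher.
    """
    return any(fnmatchcase(part, PATTERN) for part in path.split("/"))


# Hypothetical paths for illustration.
examples = [
    "_#_todo.txt",
    "src/_#_bug_repro.py",
    "_#_scratch/banner_xxl.jpg",
    "README.md",
]

for path in examples:
    status = "ignored" if looks_ignored(path) else "tracked as usual"
    print(f"{path}: {status}")
```

The prefix has to lead a file or directory name to count, so a file like `notes_#_.txt` would not be caught.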

Paper on Narrating Robot Experience at CoRL 2024

Our first stab at turning robot experience into a coherent narrative is at CoRL this week. Zihan put up a great overview here and included a ton of detail in the appendices. We hope that others will pick up on similar applications of LLMs as summarizers and narrators for robot experience.

Also appearing, a workshop paper thinking about how LLMs might help developers edit robot state machines.

"Cascade Lane" Becomes Opinion of UW Student Body

The ASUW Student Senate passed R-30-20, a “Resolution Calling for the Naming of Cascade Lane” which I sponsored. It

> calls for the official naming of the area deemed “Cascade Lane,” the asphalt path from the southeast steps of Red Square that extends to the edge of the concrete path circling Drumheller Fountain. The bill’s sponsor aims to prioritize its repair by first naming the path, which experiences heavy foot traffic and is in need of repair.

One of the Senate’s functions is to find student consensus on university matters, so with this, Cascade Lane becomes the opinion of the student body.

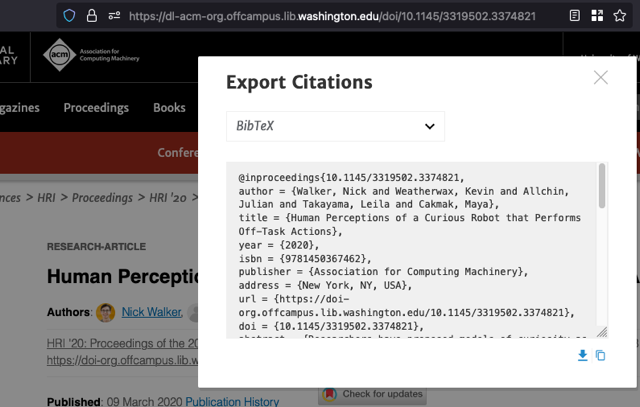

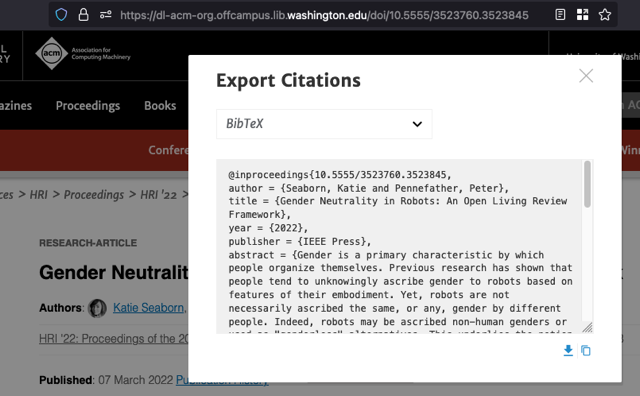

The ACM Digital Library Has a Fake DOI Problem

The ACM Digital Library sometimes presents fake DOIs, and it’s spreading broken links across the web.

DOIs are unique, permanent identifiers for research artifacts. Publishers assign a DOI to a paper, then https://doi.org/ resolves it to the paper’s landing page in perpetuity. When publishers fold, they transfer stewardship to another entity and the link lives on. They’re academic permalinks, so you can find them in citations, on CVs, and in the metadata of papers themselves.

I noticed ACM DL’s odd DOIs while looking at the HRI 2022 proceedings, where every paper’s page has a URL that looks like https://dl.acm.org/doi/10.5555/3523760.3524000. The DOI implied by this URL, 10.5555/3523760.3524000, is not real and does not resolve. Turns out, ACM uses the 10.5555 prefix anywhere that it cross-lists content from another publisher, as in this case with IEEE. Each of these documents has a real DOI, issued by IEEE and resolvable to a page in IEEE Xplore, but ACM won’t tell you what it is. This paper’s is 10.1109/HRI53351.2022.9889569.
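
If you want to check your own links, a small Python sketch along these lines could flag the placeholder prefix. It assumes the URL shape shown above (`https://dl.acm.org/doi/<doi>`) and treats any DOI starting with `10.5555/` as a placeholder, as described in this post. The function names are made up for illustration, and other URL shapes are not handled.

```python
from typing import Optional
from urllib.parse import urlparse

# Prefix this post identifies as ACM's placeholder for cross-listed content.
PLACEHOLDER_PREFIX = "10.5555/"


def doi_from_acm_url(url: str) -> Optional[str]:
    """Return the DOI implied by a dl.acm.org/doi/<doi> URL, or None."""
    parsed = urlparse(url)
    if parsed.netloc != "dl.acm.org" or not parsed.path.startswith("/doi/"):
        return None
    return parsed.path[len("/doi/"):] or None


def is_placeholder(doi: str) -> bool:
    """True if the DOI carries the placeholder prefix and should not be cited."""
    return doi.startswith(PLACEHOLDER_PREFIX)


implied = doi_from_acm_url("https://dl.acm.org/doi/10.5555/3523760.3524000")
print(implied, is_placeholder(implied))

# The real DOI issued by IEEE for the same paper.
print(is_placeholder("10.1109/HRI53351.2022.9889569"))
```

This only tells you that a DOI is a placeholder. Finding the real one still means looking the paper up with the original publisher.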

Exporting the citation for these cross-listed papers omits the DOI field, but the BibTeX key includes the fake DOI. This makes it easy to mistakenly use the fake DOI as the doi= field, especially since BibTeX keys are usually formatted as authorYYYYtitle, so a key that looks like a DOI reads as though it must be one.

Both entries appear to be keyed with DOIs, but the DOI for the paper published through IEEE Press is not real.

Nothing here is technically incorrect, but it’s misleading. You can find dozens of these fake DOIs being treated as real in the wild. I found them on lab web pages, in metadata, and even in published papers.

It seems likely that this is happening because the Digital Library uses DOIs as natural keys for its entries. This is another good example of why you shouldn’t use natural keys, even supposedly unique and identifying ones like DOIs. If placeholder values are used (tsk-tsk), they can mislead users into treating them as genuine identifiers. Users wouldn’t make the same mistake if every page were keyed with a UUID.
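
As a sketch of the alternative, here is roughly what a surrogate-keyed record could look like in Python. This is not how the Digital Library is built (I don't know its internals). The `Entry` class, the titles, and the `library.example` URL scheme are all hypothetical.

```python
import uuid
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Entry:
    title: str
    # The real DOI when one is known; None instead of a placeholder value.
    doi: Optional[str] = None
    # Surrogate key: opaque, and impossible to mistake for a DOI.
    key: uuid.UUID = field(default_factory=uuid.uuid4)

    def url(self) -> str:
        # Hypothetical URL scheme, for illustration only.
        return f"https://library.example/entry/{self.key}"


# A cross-listed paper whose real DOI (from IEEE) is known.
known = Entry(title="Cross-listed paper", doi="10.1109/HRI53351.2022.9889569")
# A cross-listed paper whose DOI has not been recorded.
unknown = Entry(title="Another cross-listed paper")

for entry in (known, unknown):
    print(entry.url(), entry.doi)
```

With this shape, a missing DOI stays visibly missing, and nothing in the page URL invites being pasted into a doi= field.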